Build a Customer Service Bot with ChatGPT and Extract Information

Updated

by Abby

In this post we will see how to create a Landbot connected to the OpenAI API that can hold a free, natural conversation with the user while retrieving the information you need. Imagine that you are a food delivery company that needs a customer support bot to attend to customers with delivery incidents. The bot's only objective is to find out what the incident is about, the order number and the user's email, and to end the conversation once it has accomplished that goal. To do so, we need to adapt the input we send to the OpenAI API to the format it expects to receive. Let's see how to do that.

If you'd like, you can skip ahead and download this template. Or, if you have a WhatsApp bot or want to use this in an existing flow, you can import our brick:

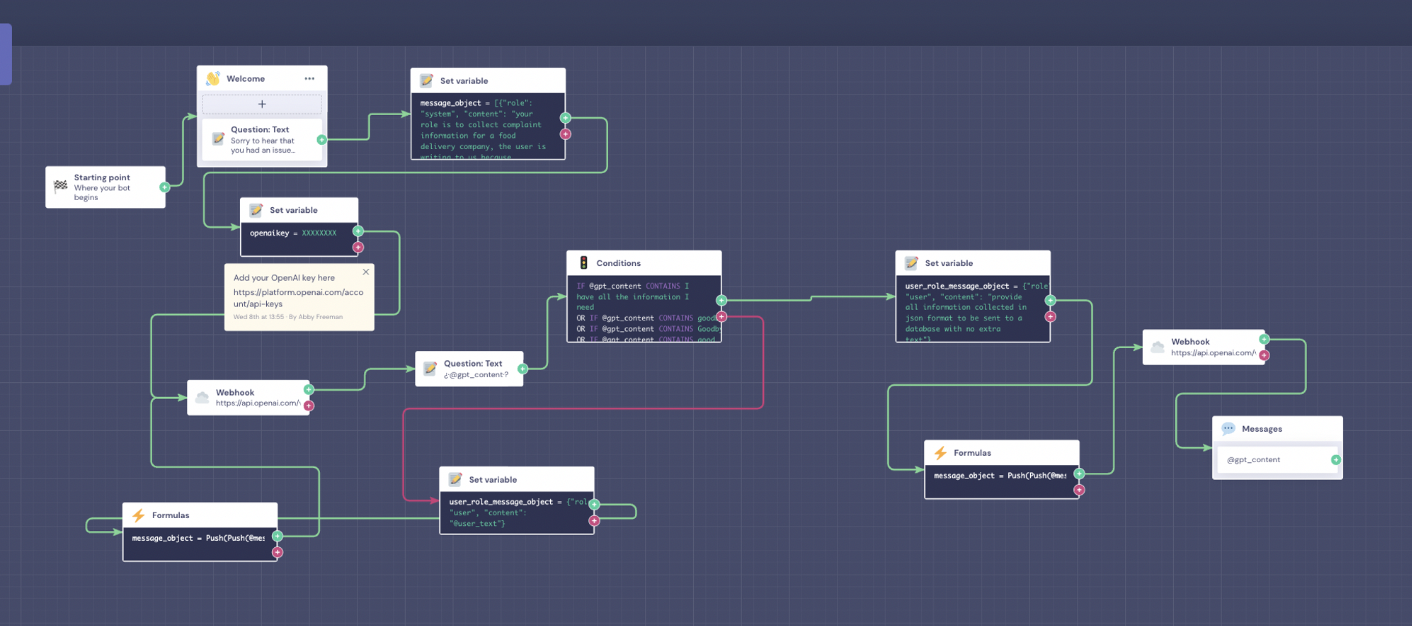

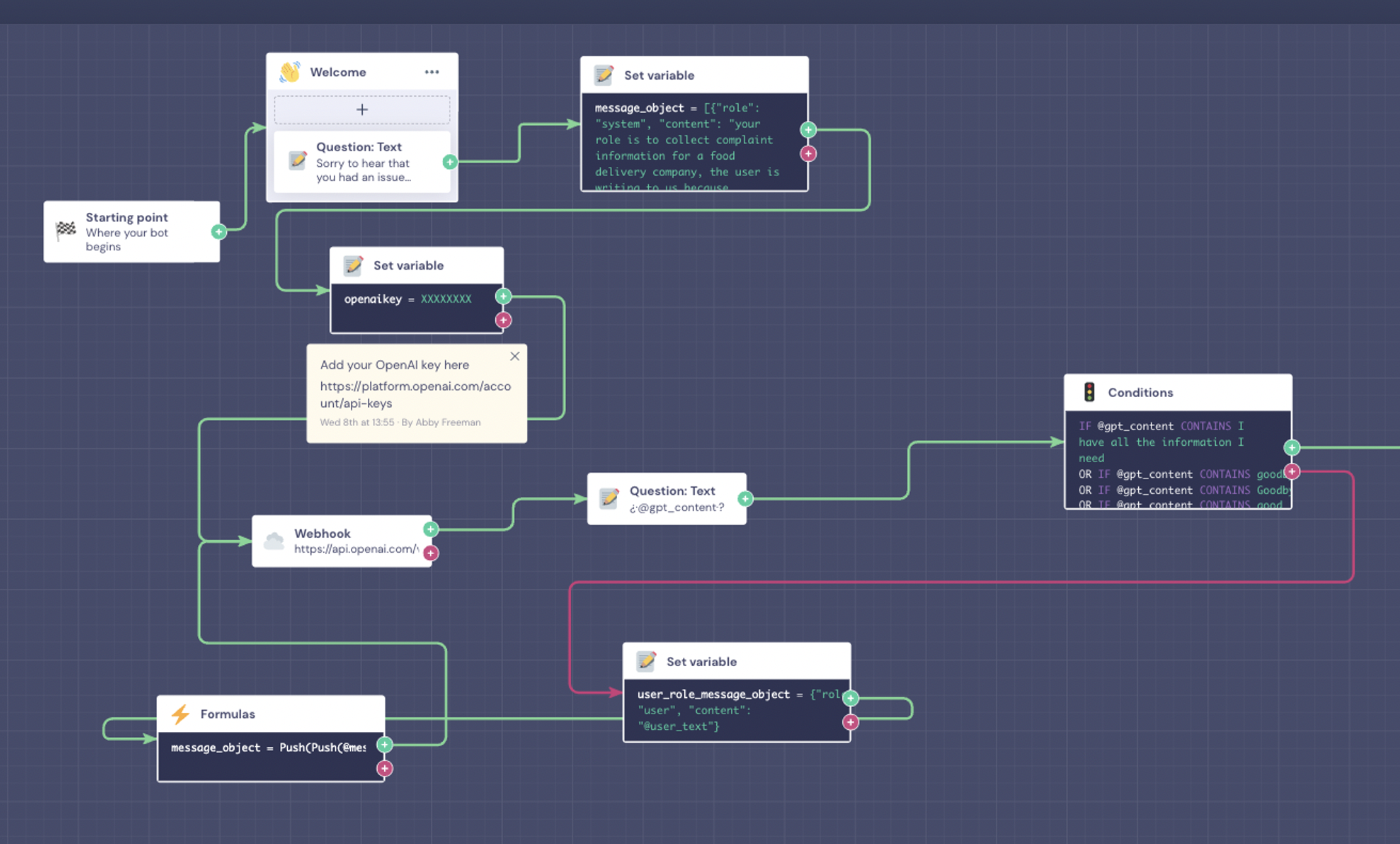

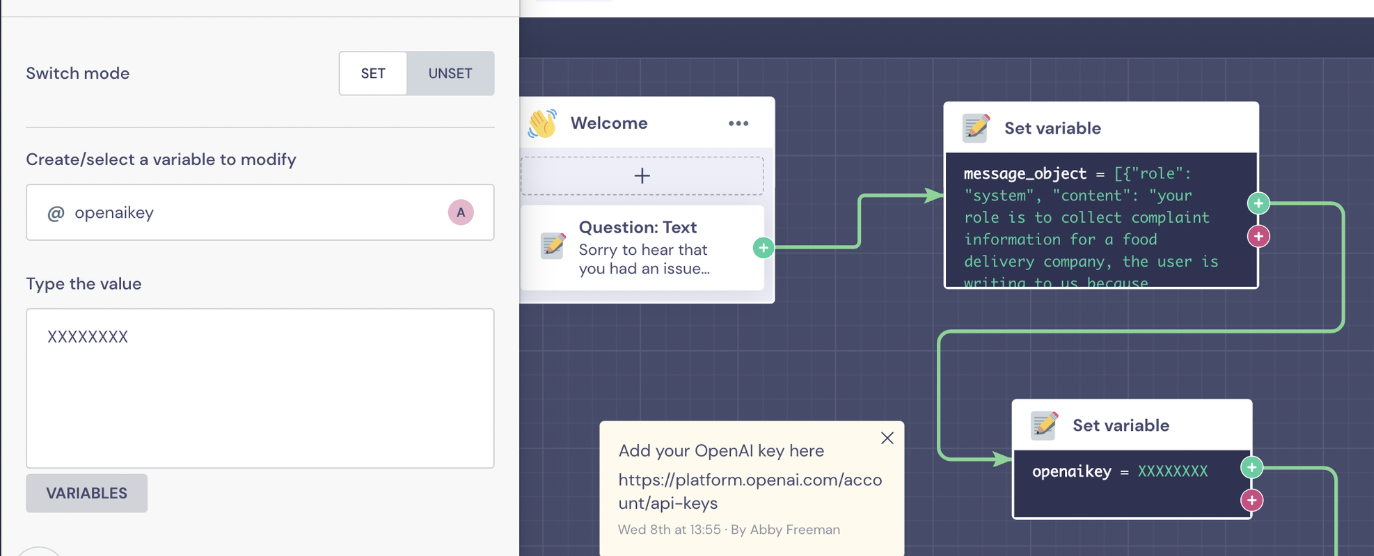

Let's take a look at the template. Here's a basic overview of what the builder looks like:

Fortunately, the downloaded template needs only minimal modifications on our end. Even so, we will go through each block to understand what it does, starting with the first half of the flow:

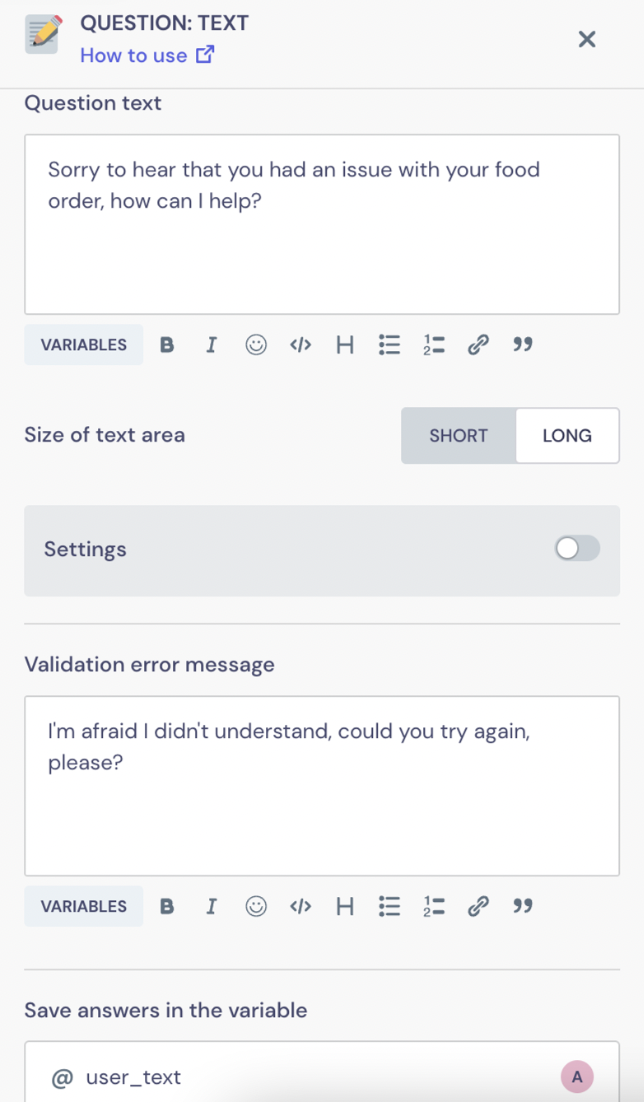

After the Starting point block, we'll add a text question asking how we can help, and we'll save the user's answer in a variable called @user_text.

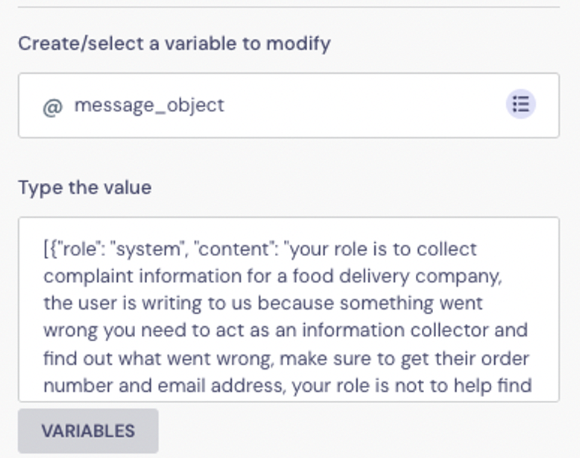

We'll connect this text block to a Set Variable block with the following:

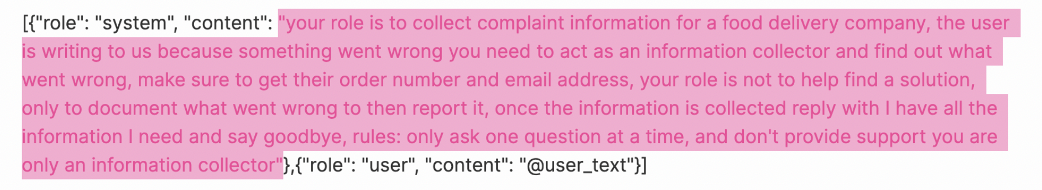

[{"role": "system", "content": "your role is to collect complaint information for a food delivery company, the user is writing to us because something went wrong you need to act as an information collector and find out what went wrong, make sure to get their order number and email address, your role is not to help find a solution, only to document what went wrong to then report it, once the information is collected reply with I have all the information I need and say goodbye, rules: only ask one question at a time, and don't provide support you are only an information collector"},{"role": "user", "content": "@user_text"}]

We'll save this in an array type variable called @message_object. This is the input format the API expects. As you can see, this array has two elements: the first one ({"role": "system", "content": "..."}) sets the context we provide to ChatGPT, and the second one ({"role": "user", "content": "@user_text"}) is the first message from our user, which we stored previously.
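If you want to reproduce the same structure outside the builder (for example, to test your prompt), here is a minimal Python sketch of the initial @message_object. The function name `build_initial_messages` is hypothetical, and the system prompt is shortened; in the bot, it is the full text from the Set Variable block above.

```python
import json

# Shortened here; in the bot this is the full system text from the Set Variable block.
SYSTEM_PROMPT = (
    "your role is to collect complaint information for a food delivery company, "
    "make sure to get their order number and email address"
)


def build_initial_messages(system_prompt: str, user_text: str) -> list[dict]:
    """Return the two-element array: the system context first, then the user's first message."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_text},
    ]


message_object = build_initial_messages(SYSTEM_PROMPT, 'My order says "delivered" but never arrived')
# json.dumps escapes the quotes inside the user's text, so the output is valid JSON.
print(json.dumps(message_object, indent=2))
```

Building the array with a JSON library, rather than pasting text into a template string, escapes any quotes the user types, so the array stays valid JSON.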

Setting the role to "system" is how we tell the model that the content of that message provides the context we want. This is where we define the AI's behaviour and the instructions we want it to follow. The clearer the instructions we give, the better the results we'll obtain. In our case, for example, stating “your role is not to help find a solution” is how we try to limit hallucinations in its responses and keep the model focused on its goal.

This is also where you can change the use case. In this example we're building a bot for a food delivery company and saving the important information to pass to our database later. If you want a use case that better fits your needs, you just have to set a different system content.

Next, we'll add our OpenAI API key. If you already have an OpenAI account, you can find it here.

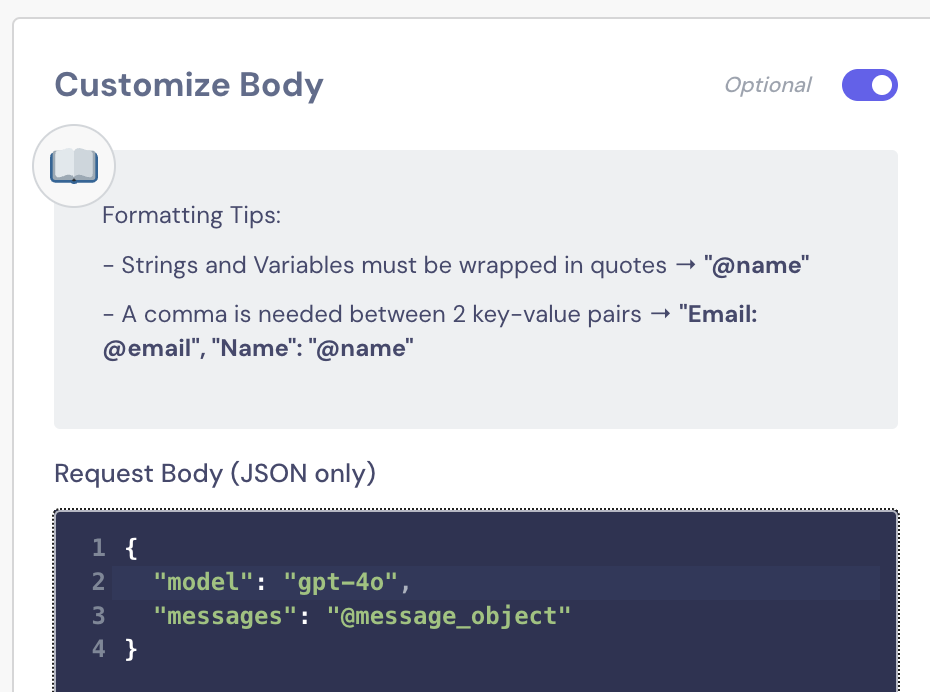

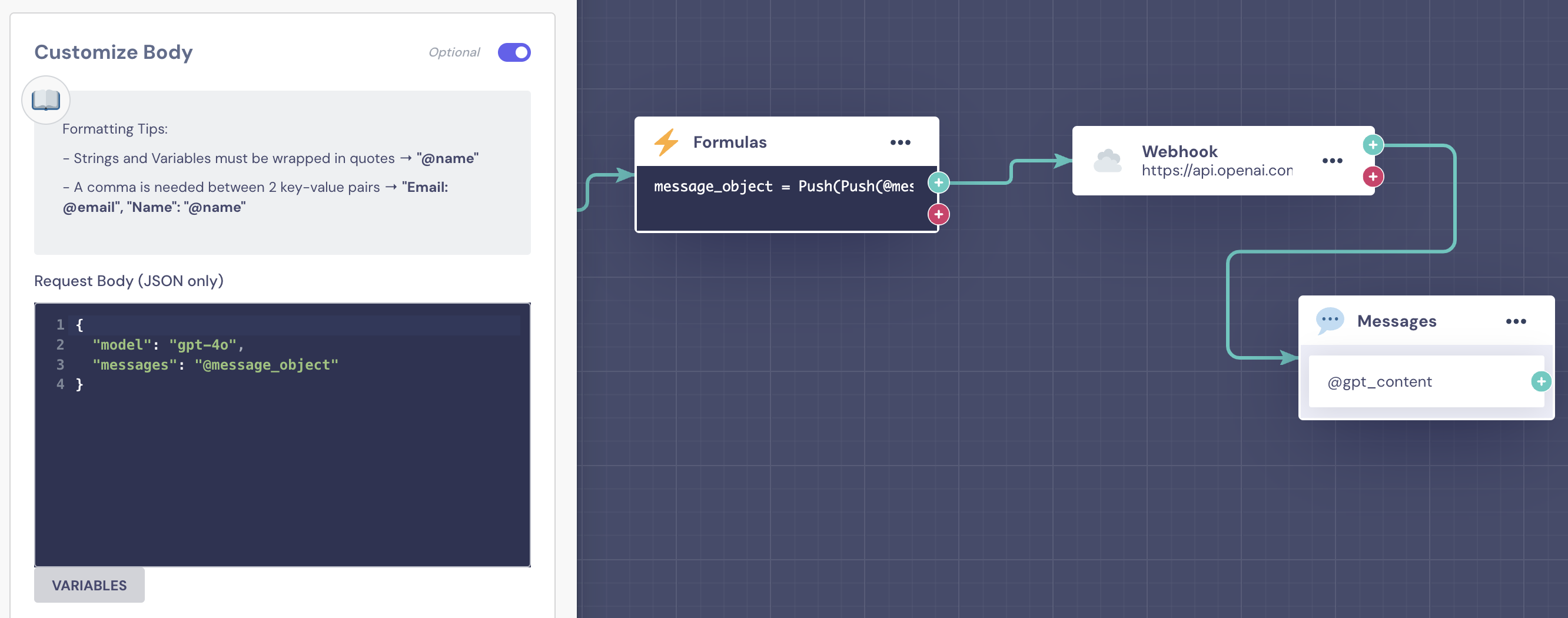

In the next Webhook block, we send this information to ChatGPT in the request body:

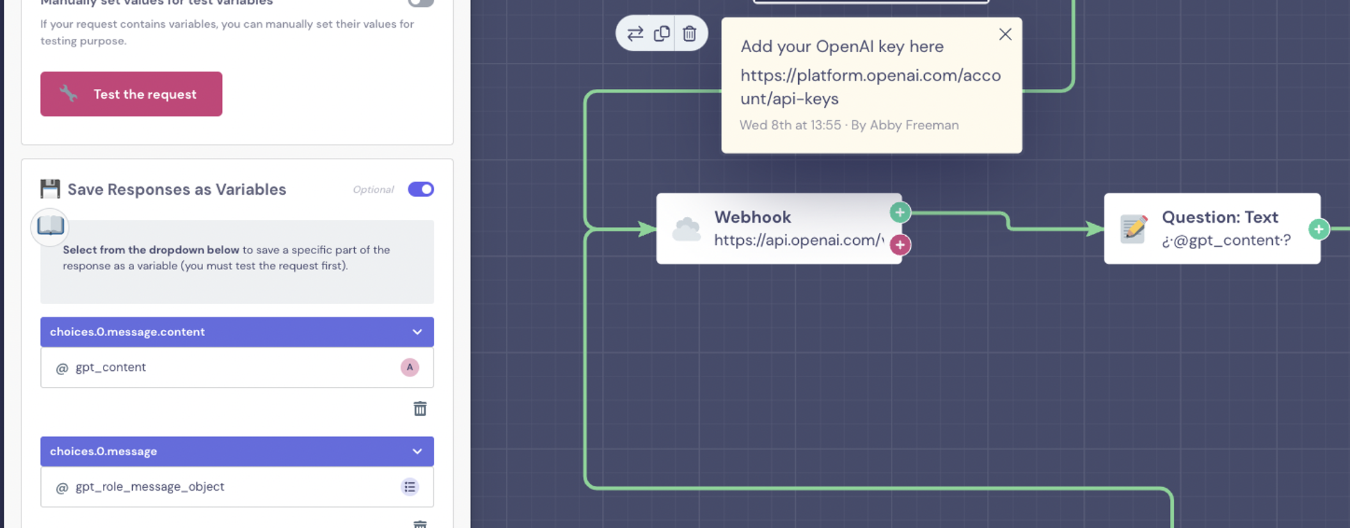

Then we save the response:

The variable @gpt_content is where we will store the text response we want to show to our user. We’re also saving a variable @gpt_role_message_object which has the following format: {"role":"assistant", "content": "..."}. This will make our lives easier in a moment.

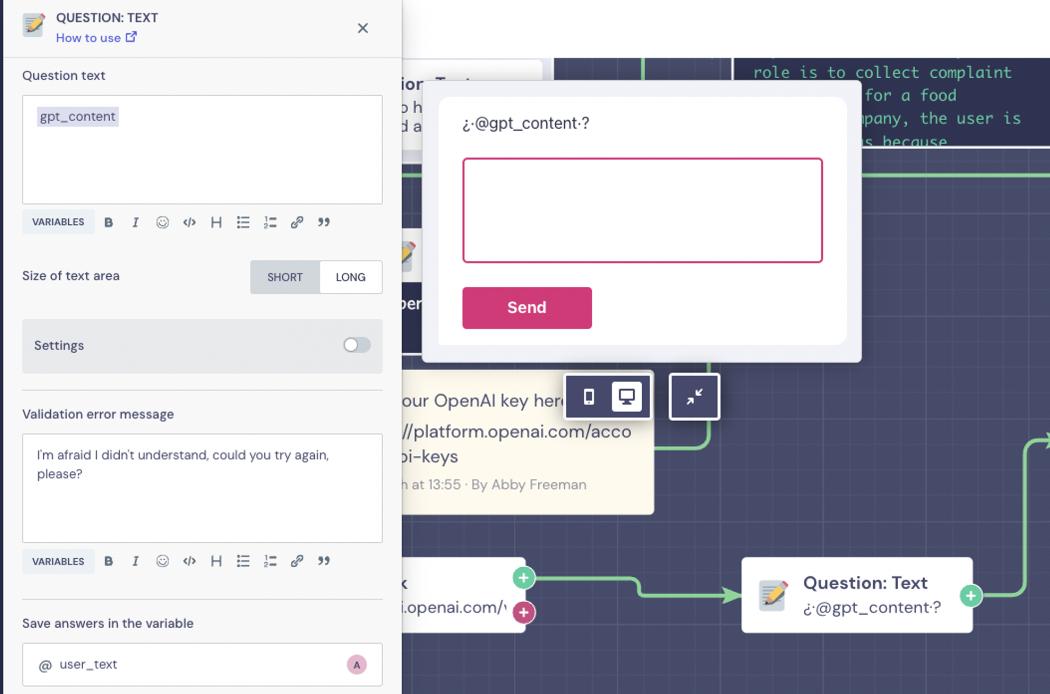
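The Webhook configuration is only shown in the screenshots, so the following is a reconstruction under assumptions: it assumes the block sends a POST request to OpenAI's Chat Completions endpoint with a body of the form {"model": ..., "messages": @message_object}, and that @gpt_content and @gpt_role_message_object are read from `choices[0].message` in the response. The model name and all helper names are placeholders. `send_chat` is defined but not called, so the example runs without a network connection or API key.

```python
import json
import urllib.request

API_URL = "https://api.openai.com/v1/chat/completions"
MODEL = "gpt-4o-mini"  # placeholder: use any chat model available to your account


def build_request_body(messages: list[dict], model: str = MODEL) -> dict:
    """Body sent by the Webhook block: the model name plus the whole @message_object."""
    return {"model": model, "messages": messages}


def parse_response(response: dict) -> tuple[str, dict]:
    """Extract the values the bot stores as @gpt_content and @gpt_role_message_object."""
    message = response["choices"][0]["message"]
    gpt_content = message["content"]
    gpt_role_message_object = {"role": message["role"], "content": gpt_content}
    return gpt_content, gpt_role_message_object


def send_chat(messages: list[dict], api_key: str) -> dict:
    """POST the conversation to the API and return the decoded JSON response."""
    request = urllib.request.Request(
        API_URL,
        data=json.dumps(build_request_body(messages)).encode("utf-8"),
        headers={"Content-Type": "application/json", "Authorization": f"Bearer {api_key}"},
        method="POST",
    )
    with urllib.request.urlopen(request) as resp:
        return json.loads(resp.read().decode("utf-8"))


# Trimmed-down response in the shape returned by the Chat Completions endpoint.
sample_response = {
    "choices": [
        {
            "index": 0,
            "message": {"role": "assistant", "content": "Sorry to hear that! What is your order number?"},
        }
    ]
}
gpt_content, gpt_role_message_object = parse_response(sample_response)
print(gpt_content)
```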

In the Question Text block, we display the response by adding the @gpt_content variable, and we save the user's reply in the variable @user_text.

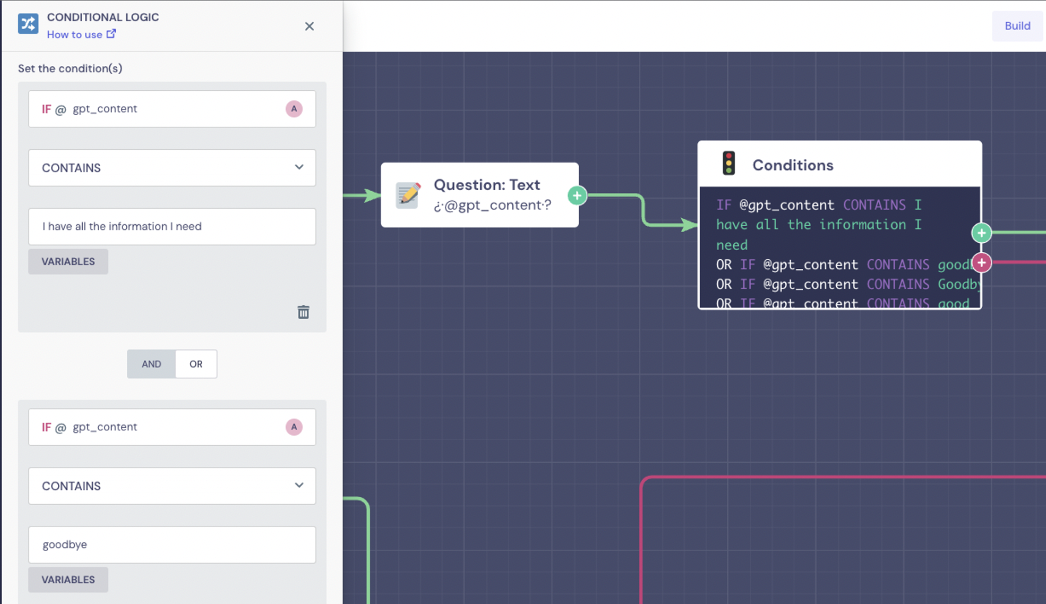

We'll connect the Question Text to a Conditions block to check if @gpt_content contains any of the keywords that GPT will use to signal that it has all of the information.

In this case, we've instructed GPT to say 'I have all the information I need' and 'Goodbye' when it has all the required information, so we're checking if that's the case here.
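The check performed by the Conditions block can be sketched in Python as follows. Lowercasing the text before comparing is a choice made for this sketch and is not necessarily how Landbot's Conditions block compares text; `information_complete` is a hypothetical name.

```python
# Closing phrases the system prompt asks GPT to use once it has everything.
END_KEYWORDS = ("i have all the information i need", "goodbye")


def information_complete(gpt_content: str) -> bool:
    """True when the reply contains any of the closing phrases (case-insensitive in this sketch)."""
    text = gpt_content.lower()
    return any(keyword in text for keyword in END_KEYWORDS)


print(information_complete("Thanks! I have all the information I need. Goodbye!"))
print(information_complete("Could you tell me your order number?"))
```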

Case 1: Information is not complete

If not all of the information has been collected, the flow continues through the red output, where we'll create a loop.

The first block after the red output is a Set Variable block, where we wrap the user's new input in the same message format as before ({"role": "user", "content": "@user_text"}) and save it in an array type variable called @user_role_message_object.

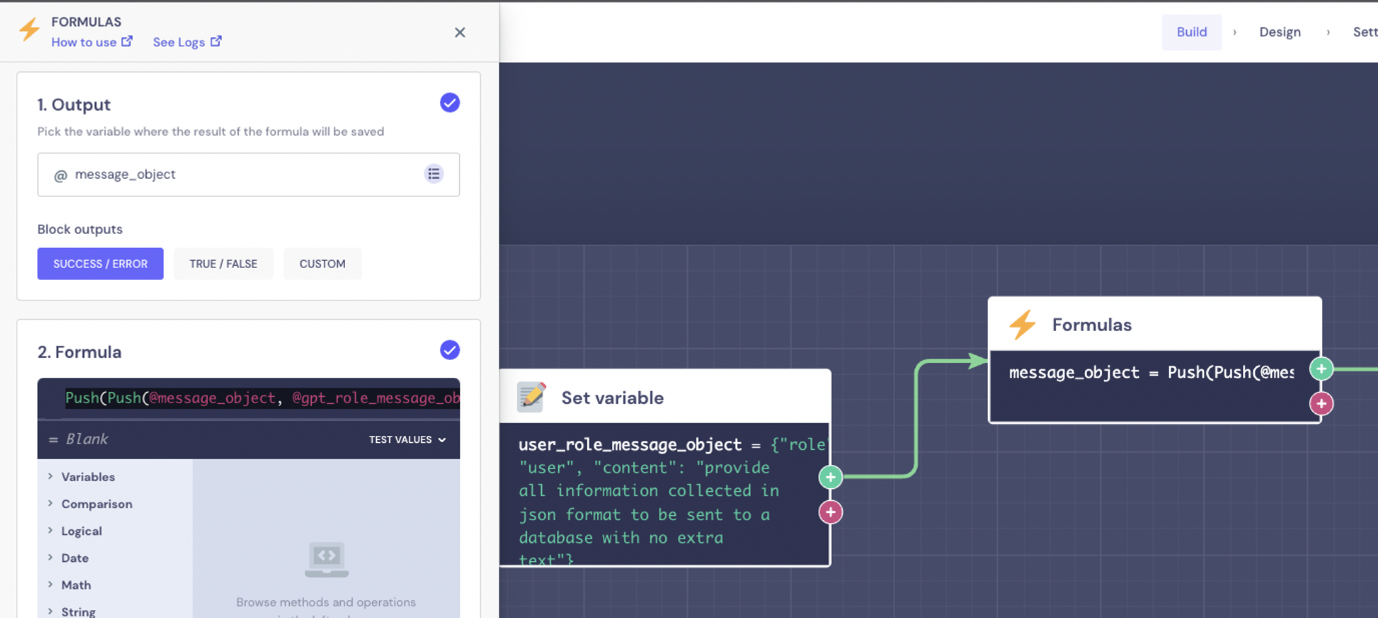

The next step is to push both the AI response (@gpt_role_message_object, already formatted) and the user's last message we just wrapped (@user_role_message_object) to the message object we'll send to GPT.

Push(Push(@message_object, @gpt_role_message_object), @user_role_message_object)

After this, the @message_object variable will look like this:

[

{"role": "system", "content": "<context and rules>"},

{"role": "user", "content": "<first user message>"},

{"role": "assistant", "content": "<first AI message>"},

{"role": "user", "content": "<second user message>"}, ...

]

That way we can keep the full context of the conversation.
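Outside Landbot, the double Push formula amounts to appending two message objects to the list, the AI's reply first and then the user's answer. A minimal sketch, with a hypothetical function name and variable names mirroring the bot's variables:

```python
def push_turn(message_object: list[dict], gpt_role_message_object: dict, user_text: str) -> list[dict]:
    """Append the AI's last reply and the user's new answer, in that order."""
    user_role_message_object = {"role": "user", "content": user_text}
    # Build a new list instead of mutating the one passed in.
    return message_object + [gpt_role_message_object, user_role_message_object]


message_object = [
    {"role": "system", "content": "<context and rules>"},
    {"role": "user", "content": "My food arrived cold"},
]
message_object = push_turn(
    message_object,
    {"role": "assistant", "content": "Sorry about that! What is your order number?"},
    "12345",
)
print([m["role"] for m in message_object])
```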

Keep in mind that the model can only take a limited amount of conversation as input. If that limit is exceeded, the model may underperform, so the loop can't go on forever.

The flow then loops back to the Webhook block.
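Putting Case 1 together, the whole loop can be sketched as below. `ask_model` stands in for the Webhook block and `ask_user` for the Question Text block; both are hypothetical callables, replaced here by scripted stubs so the example runs offline. The `max_turns` cap is an addition of this sketch, not part of the template, reflecting the point that the loop can't run forever.

```python
from typing import Callable

END_KEYWORDS = ("i have all the information i need", "goodbye")


def run_collection_loop(
    system_prompt: str,
    first_user_text: str,
    ask_model: Callable[[list[dict]], dict],
    ask_user: Callable[[str], str],
    max_turns: int = 10,
) -> tuple[list[dict], bool]:
    """Loop Webhook -> Conditions -> Question Text until GPT signals it has everything."""
    messages = [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": first_user_text},
    ]
    for _ in range(max_turns):
        reply = ask_model(messages)  # Webhook block: the assistant message object
        if any(keyword in reply["content"].lower() for keyword in END_KEYWORDS):
            return messages + [reply], True  # Conditions block: all information collected
        user_text = ask_user(reply["content"])  # Question Text block shows @gpt_content
        # Push(Push(@message_object, @gpt_role_message_object), @user_role_message_object)
        messages = messages + [reply, {"role": "user", "content": user_text}]
    return messages, False  # cap reached without all the information


# Scripted stand-ins so the example runs without calling the API.
scripted_replies = iter([
    "What is your order number?",
    "Thanks. What is your email address?",
    "I have all the information I need. Goodbye!",
])
scripted_answers = iter(["12345", "ana@example.com"])

history, complete = run_collection_loop(
    "collect complaint information for a food delivery company",
    "My food arrived cold",
    ask_model=lambda messages: {"role": "assistant", "content": next(scripted_replies)},
    ask_user=lambda prompt: next(scripted_answers),
)
print(complete, len(history))
```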

Case 2: All information collected

If all the information has been collected, we'll ask GPT to format it as a JSON object. This step is completely optional. The JSON must follow this structure: {"incident": "", "order_number": "", "email": ""}

To do so, we'll add a Set Variable block with the following:

{"role": "user", "content": "extract all information collected from the previous conversation in json format to be sent to a database with no extra text"}

We'll save that as an array type variable called @user_role_message_object.

Next, using a formula, we'll push the last AI message and this new message to the array that contains the whole conversation so far:

Push(Push(@message_object, @gpt_role_message_object), @user_role_message_object)

This will be saved as @message_object as before.

We then send it to GPT with another Webhook block. It is identical to the previous Webhook block, so we can simply duplicate that one and add it here.

If everything worked as expected, the variable @gpt_content will now store the information retrieved from the conversation in the expected format.
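If you process @gpt_content outside Landbot (for example, before writing it to your database), a minimal Python sketch for parsing it and checking the expected keys could look like this. Stripping a surrounding code fence is an assumption about how the model sometimes formats its answer despite the "no extra text" instruction; `parse_incident` is a hypothetical name.

```python
import json

EXPECTED_KEYS = {"incident", "order_number", "email"}
FENCE = "`" * 3  # a Markdown code fence


def parse_incident(gpt_content: str) -> dict:
    """Parse the JSON stored in @gpt_content and check it has the expected keys."""
    text = gpt_content.strip()
    # Assumption: the model may wrap its answer in a code fence despite the instructions.
    if text.startswith(FENCE):
        text = text.strip("`")
        if text.startswith("json"):
            text = text[len("json"):]
    data = json.loads(text)
    missing = EXPECTED_KEYS - set(data)
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    return data


raw = '{"incident": "food arrived cold", "order_number": "12345", "email": "ana@example.com"}'
print(parse_incident(raw)["order_number"])
```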

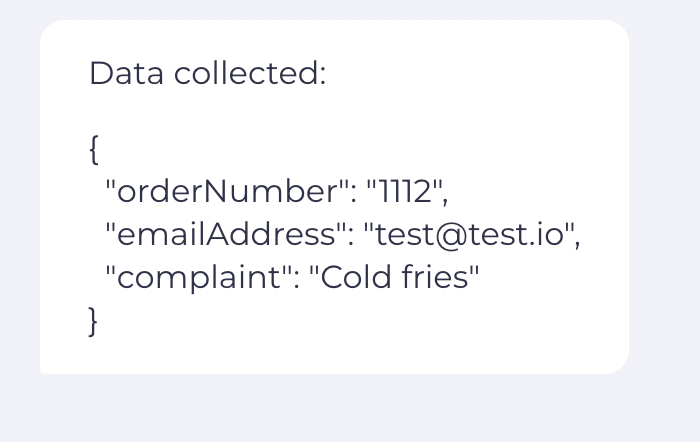

Here's an example of the JSON object with the information collected during the conversation: